Qualcomm

News

[News] Intel Recruits Qualcomm and AMD GPU Veteran Eric Demers to Advance AI Push

Intel has brought in a veteran GPU architect to strengthen its AI efforts. According to Tom’s Hardware, Eric Demers, who designed many of the leading GPUs at ATI Technologies, later acquired by AMD, and went on to lead Qualcomm’s GPU designs, has joined Intel with a focus on AI. Demers will serv...

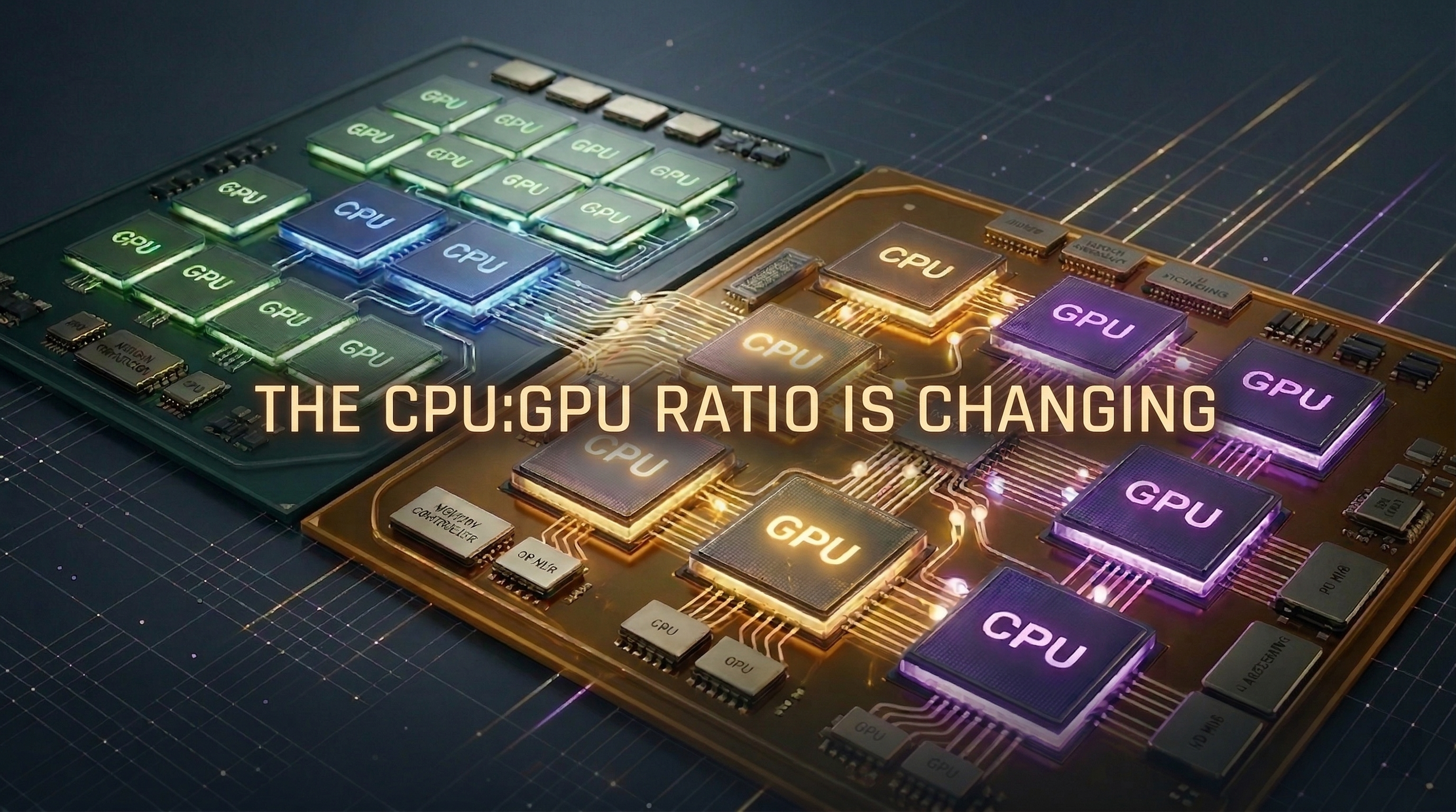

[News] AMD, NVIDIA and Others Reportedly to Debut New Chips at CES 2026, Spotlighting TSMC 3nm and 5nm

CES 2026 is set to open on January 6, with major companies expected to unveil new chips, drawing broad industry attention. According to Commercial Times, upcoming processors from AMD, Qualcomm, and NVIDIA are all slated to be manufactured by TSMC, primarily using its 3nm and 5nm process technologies...

[News] Samsung Reportedly Pushes In-House CPU and GPU for Exynos 2800 to Cut Qualcomm, AMD Reliance

Samsung recently unveiled the Exynos 2600 built on its in-house 2nm GAA process, signaling a renewed push in advanced manufacturing and a long-term effort to reduce reliance on Qualcomm, a shift expected to accelerate with next-gen Exynos. According to Wccftech, sources say the company is developin...

[News] Key Highlights to Watch at CES 2026: From Intel Panther Lake to Qualcomm’s Snapdragon X2 Elite

With CES 2026 approaching, what new releases are worth watching? According to a column by Zhu Xi in TechNews, while next year may see relatively fewer vendors launching new products, there are still several notable releases worth anticipating. The following highlights the key upcoming products in th...

[News] Samsung Reportedly Cuts Exynos 2600 Price by $20–30 Below Qualcomm to Spur Adoption

Samsung’s first 2nm chip, the Exynos 2600, has become a crucial test of its foundry strategy and how much it can realistically replace Qualcomm’s offerings. According to Chosun Biz, citing industry sources, Samsung plans to use a mix of Exynos 2600 and Snapdragon chips in the standard and Plus m...
