Artificial Intelligence

News

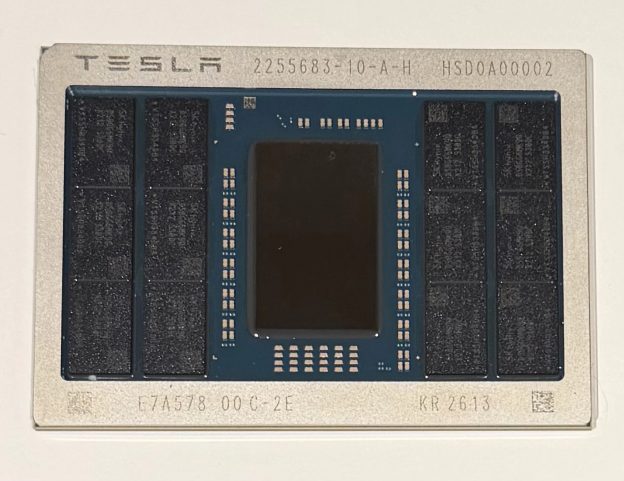

[News] Musk Confirms AI5 Tape-Out, but Wrong TSMC Tag Triggers Social Media Mix-Up

While Tesla is collaborating with Intel on its ambitious Terafab project, the company has also reached a key milestone in its next-generation AI chip program. According to Tesla CEO Elon Musk on X, the AI5 chip has officially completed tape-out. As previously reported by TechPowerUp, Tesla opts t...

[News] China Reportedly Closes AI Performance Gap with U.S., Stanford Report Says; Anthropic Leads by Just 2.7%

The U.S.’s lead in AI may be narrowing. According to the latest report from the Stanford Institute for Human-Centered Artificial Intelligence (Stanford HAI), China has reportedly closed the performance gap with the U.S. The report notes that U.S. and Chinese models have traded the lead multiple ti...

[News] AI Compute Prices Rise Across China: Tencent Joins Alibaba, Baidu in Hikes; Zhipu Raises Prices Again

Rising AI compute demand is driving a new wave of price increases, signaling that computing power is becoming an increasingly scarce resource in the AI era. According to Jiemian News, following Alibaba Cloud and Baidu Cloud, Tencent Cloud has also joined the ranks of providers raising AI compute pri...

[News] Huawei 2025 R&D Spending Reaches Record 192.3B Yuan, 22% of Revenue; Cloud Revenue Slips

Please note that this article cites information from Huawei, Reuters, CNBC, Alibaba, and South China Morning Post. Huawei has released its 2025 annual report. According to Reuters, the company reported 2.2% revenue growth in 2025 on Tuesday, supported by its core infrastructure network and con...

[News] Oracle Reportedly Slashes Thousands of Jobs as AI Spending Surges

Please note that this article cites information from CNBC, Business Insider, Reuters, and Layoffs.fyi. Oracle is reportedly undertaking job cuts. According to CNBC, sources say the company has begun informing employees that it plans to reduce its workforce by thousands. Business Insider adds tha...