Microsoft

News

[News] Samsung Reportedly Eyes Long-Term Memory Deals with Google, Microsoft; May Include $10B+ Prepayments

Please note that this article cites information from EBN News, ZDNet, Micron, and News Tomato. Memory giants are reportedly moving toward multi-year supply agreements to enhance stability, with potential deal structures and prospective customers beginning to take shape. According to EBN News, i...

News

[News] US Reportedly Mulls Tariff Exemptions for Amazon, Google, Microsoft on TSMC-Made Chips

While many details of the semiconductor duties remain unclear, one certainty is that in January the Trump administration slapped a 25 percent levy on certain AI chips from AMD and NVIDIA. Now, the Financial Times reports that Washington plans to exempt major tech players like Amazon, Google, and Mic...

News

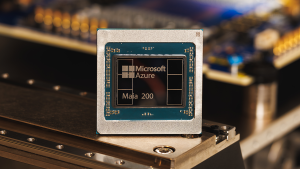

[News] Microsoft Unveils Maia 200 AI Chip on TSMC 3nm; SK hynix Reportedly Sole HBM3E Supplier

Microsoft unveiled its latest in-house AI chip, Maia 200, marking a further push into custom silicon for data-center AI workloads. According to the company’s press release, the chip is fabricated on TSMC’s 3nm process and features native FP8 and FP4 tensor cores designed to enhance performance a...

News

[News] Sony, Microsoft Reportedly Weigh Delaying Next-Gen Game Consoles Beyond 2028 Amid Memory Price Surge

Memory prices are climbing, reportedly affecting the product planning of major game console makers. According to ITHome, citing Insider Gaming, sources say console manufacturers Sony and Microsoft are weighing whether to delay the next generation of consoles beyond their originally targeted 2027–2...

News

[News] Hyperscalers Ramp Up Capex Amid AI Boom, Risks Lurk: Microsoft, Meta & Alphabet in Spotlight

As hyperscalers kick off their latest earnings reports today, capex is taking center stage amid AI bubble concerns. But for now, those worries may be on hold, as Alphabet, Meta, and Microsoft all plan to ramp up spending significantly, CNBC reports. Among them, Alphabet—Google’s parent—stan...