[News] JEDEC Nears 2nd-Gen MRDIMM as CPUs Demand More Bandwidth; Samsung, SK hynix Reportedly Accelerate Push

As agentic AI fuels a structural upswing in CPU demand, the memory architecture behind those processors is coming into equally sharp focus. MRDIMM (Multiplexed Rank Dual In-line Memory Module) is emerging as one of the key solutions to the resulting memory bandwidth bottleneck, and the Joint Electron Device Engineering Council (JEDEC) is now in the final stages of completing the second-generation MRDIMM standard, according to ZDNet.

According to ZDNet, HBM is integrated with graphics processing units (GPUs) to handle AI computation workloads, while MRDIMM serves as main memory directly accessed by the CPU.

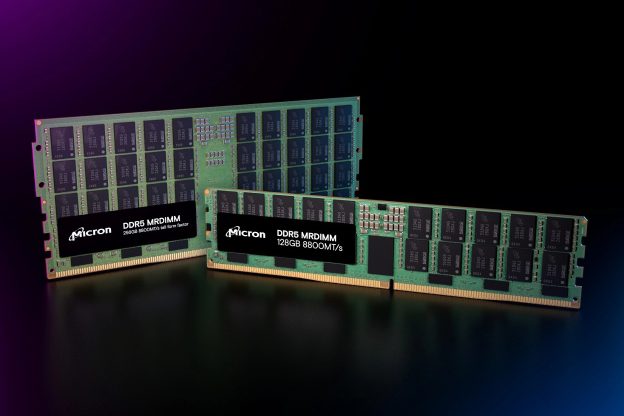

Micron as MRDIMM Forerunner

ZDNet highlights that MRDIMM, which is optimized for AI data centers, is expected to see demand expand rapidly alongside HBM. Samsung Electronics and SK hynix have also been developing MRDIMM products to capture early market share, the report adds.

Notably, U.S. memory giant Micron announced back in 2024 that it was already sampling MRDIMMs. According to Micron, MRDIMM technology, which is built on DDR5 physical and electrical standards, delivers a significant step forward in memory performance, enabling scalable gains in both bandwidth and capacity per core that help future-proof compute systems and meet the rising demands of data center workloads.

According to Micron, compared with traditional RDIMMs, MRDIMMs offer up to a 39% increase in effective memory bandwidth, more than 15% improvement in bus efficiency, and latency reductions of up to 40%.

MRDIMM Explained

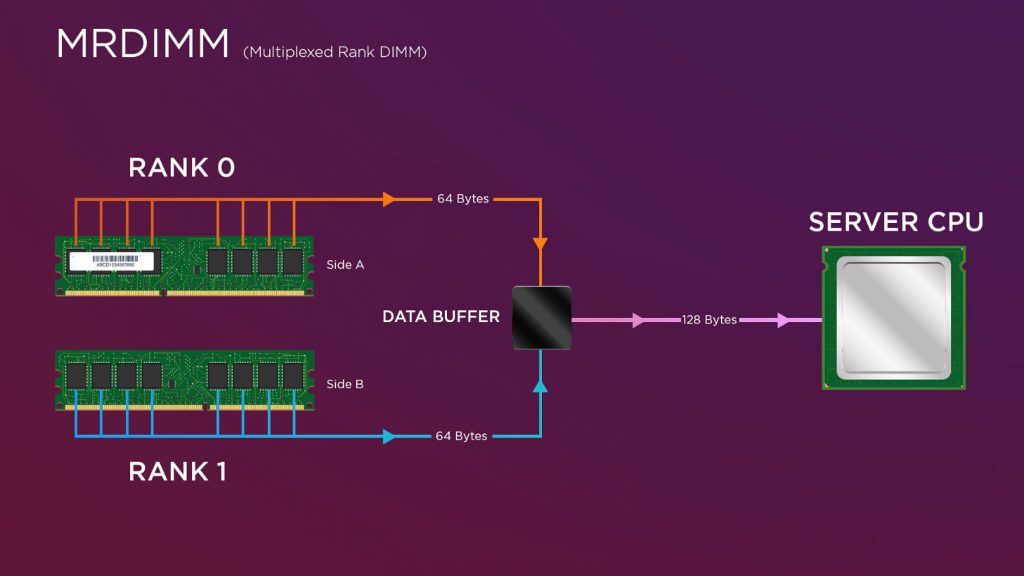

The ZDNet report explains that MRDIMM is a next-generation server DRAM module optimized for AI and high-performance computing (HPC) workloads. By allowing two ranks, the basic operating units of a memory module, to operate simultaneously, it delivers significantly faster data processing, according to the report.

However, the report also points out that as one of the latest technologies in the memory industry, MRDIMM has yet to undergo full standardization and commercialization. Only a limited number of CPUs currently support MRDIMM, with Intel’s Xeon 6 among the few examples, the report adds.

A Lenovo technical product guide further highlights that server designers adopting next-generation CPUs are increasingly turning to MRDIMMs to ease mounting memory bandwidth bottlenecks.

According to Lenovo, MRDIMMs can deliver up to 128 bytes of memory data to the CPU per operation, twice the 64 bytes of a conventional DDR5 DIMM, effectively doubling throughput. In DDR5 architecture, memory is organized into ranks, each made up of multiple DRAM chips accessed in parallel over a 64-bit data path. MRDIMMs extend this design by operating two ranks concurrently, allowing processors such as Intel Xeon and AMD EPYC to retrieve data from each module more efficiently.
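To make the rank-multiplexing idea concrete, the Python sketch below models it as a toy. It is a conceptual illustration under stated assumptions, not the JEDEC MRDIMM protocol: the 8-byte beat (taken from the 64-bit data path described above), the 8-beat burst, the beat-by-beat interleaving, and the function names `rank_burst`, `conventional_operation`, and `mrdimm_operation` are all hypothetical choices made for illustration.

```python
"""Toy model of MRDIMM rank multiplexing (illustrative only, not the JEDEC protocol)."""

BEAT_BYTES = 8        # assumption: 64-bit data path -> 8 bytes per transfer (beat)
BEATS_PER_BURST = 8   # assumption: 8 beats -> one 64-byte burst per rank access


def rank_burst(rank_id: int) -> list[bytes]:
    """Return one burst from a rank as a list of 8-byte beats (hypothetical data:
    every byte is simply the rank id, so the interleaving is easy to see)."""
    return [bytes([rank_id]) * BEAT_BYTES for _ in range(BEATS_PER_BURST)]


def conventional_operation() -> bytes:
    """Conventional DIMM in this model: one rank drives the bus per operation."""
    return b"".join(rank_burst(0))


def mrdimm_operation() -> bytes:
    """MRDIMM in this model: both ranks run concurrently, and a mux buffer
    interleaves their beats onto a host interface assumed to be clocked twice
    as fast, so the same time window carries twice the data."""
    r0, r1 = rank_burst(0), rank_burst(1)
    out = bytearray()
    for beat0, beat1 in zip(r0, r1):
        out += beat0 + beat1  # alternate: rank 0 beat, then rank 1 beat
    return bytes(out)


if __name__ == "__main__":
    print(len(conventional_operation()))  # 64
    print(len(mrdimm_operation()))        # 128
```

Running it prints 64 for the conventional case and 128 for the multiplexed case, mirroring Lenovo's 128-bytes-per-operation figure. Real modules add ECC, command scheduling, and buffer timing that this sketch deliberately ignores.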

ZDNet, citing JEDEC, reports that the industry body is now finalizing the design blueprint for second-generation MRDIMM, targeting speeds of 12,800 MT/s (megatransfers per second, where 1 MT/s equals one million data transfers per second). According to the report, that marks a roughly 45% performance jump from first-generation MRDIMMs, which run at around 8,800 MT/s.
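The speed figures can be checked with simple arithmetic. The sketch below assumes a 64-bit (8-byte) data bus per module with ECC bits excluded, which yields theoretical peak rather than delivered bandwidth; the helper name `peak_bandwidth_gbps` is hypothetical and used only for illustration.

```python
BUS_WIDTH_BYTES = 8  # assumption: 64-bit data bus per module, ECC bits excluded


def peak_bandwidth_gbps(mt_per_s: int) -> float:
    """Theoretical peak bandwidth in GB/s: transfers per second x bytes per transfer."""
    transfers_per_second = mt_per_s * 1_000_000  # 1 MT/s = one million transfers/s
    return transfers_per_second * BUS_WIDTH_BYTES / 1e9


gen1 = 8_800   # first-generation MRDIMM speed cited in the report (MT/s)
gen2 = 12_800  # second-generation target cited in the report (MT/s)
uplift = (gen2 - gen1) / gen1 * 100  # percentage increase in transfer rate

print(f"{peak_bandwidth_gbps(gen1):.1f} GB/s")  # 70.4 GB/s
print(f"{peak_bandwidth_gbps(gen2):.1f} GB/s")  # 102.4 GB/s
print(f"{uplift:.1f}%")                         # 45.5%
```

The 45.5% result matches the report's "roughly 45%" figure; the GB/s values are upper bounds that real workloads will not reach.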

(Photo credit: Lenovo)


(Photo credit: Micron)