Crossing the AI Memory Wall: Storage Layer Reallocation and HBF Analysis

Last Modified

2026-04-23

Update Frequency

Not

Format

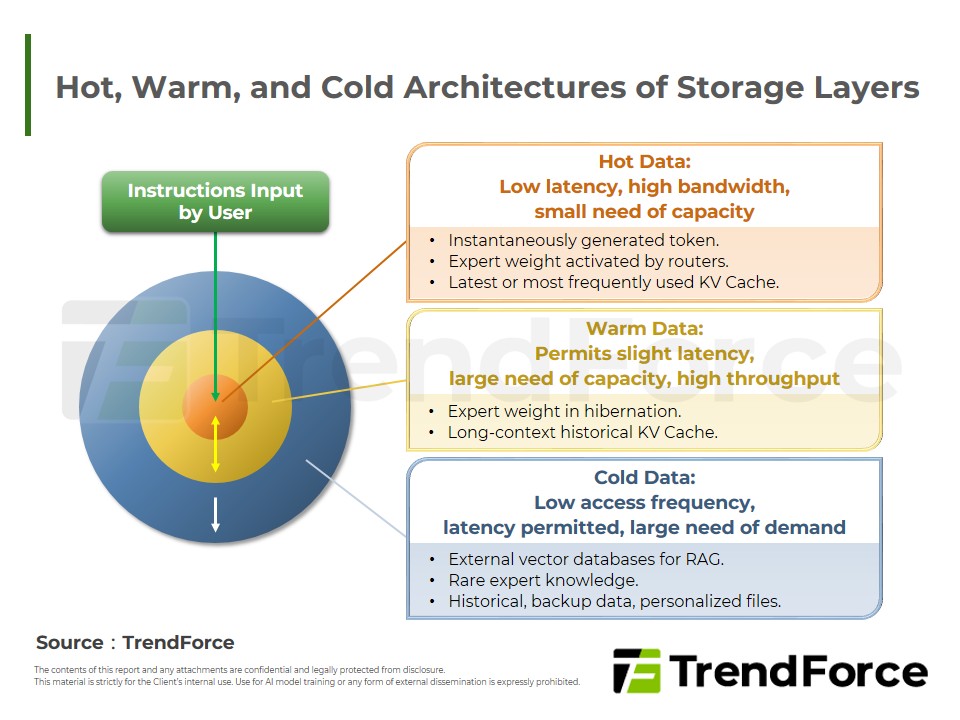

In AI inference, mixture-of-experts (MoE) architectures and long-context processing have sharply increased the memory capacity required for model weights and the KV cache, shifting the bottleneck from compute to memory capacity. Rapid growth in warm data is expected to drive a restructuring of the storage hierarchy, in which HBM holds hot data and HBF holds warm data to optimize cost-performance. Commercializing HBF, however, still requires overcoming challenges in advanced packaging and the inherent characteristics of NAND flash.

Key Highlights

- Bottleneck: Advances in AI have shifted the bottleneck from compute power to memory capacity.

- Hierarchy: Surging warm data calls for tiered storage, with HBM for hot data and HBF for warm data, to maximize cost-efficiency.

- HBF Hurdles: Commercialization requires overcoming challenges in advanced packaging and the limitations of NAND flash.

Table of Contents

- Development Bottlenecks of LLM: Impact on Computing Structures by Transformation of Model Architectures

- Figure 1: Features of MoE

- Figure 2: Deployment Strategies among AI Storage Vendors

- From Computing Bottlenecks to Restructuring of Storage Layers

- Figure 3: Hot, Warm, and Cold Architectures of Storage Layers

- Figure 4: “H³” Architecture

- Table 1: Comparison between HBM and HBF

- TRI’s View

<Total Pages: 13>

Category: Semiconductors

Spotlight Report

-

AI Reshapes Memory: Market Revenue to Peak by 2027

2026/01/20

Selected Topics

PDF

-

Smartphone Storage: AI Driven Growth - 2026

2026/03/18

Selected Topics

PDF

-

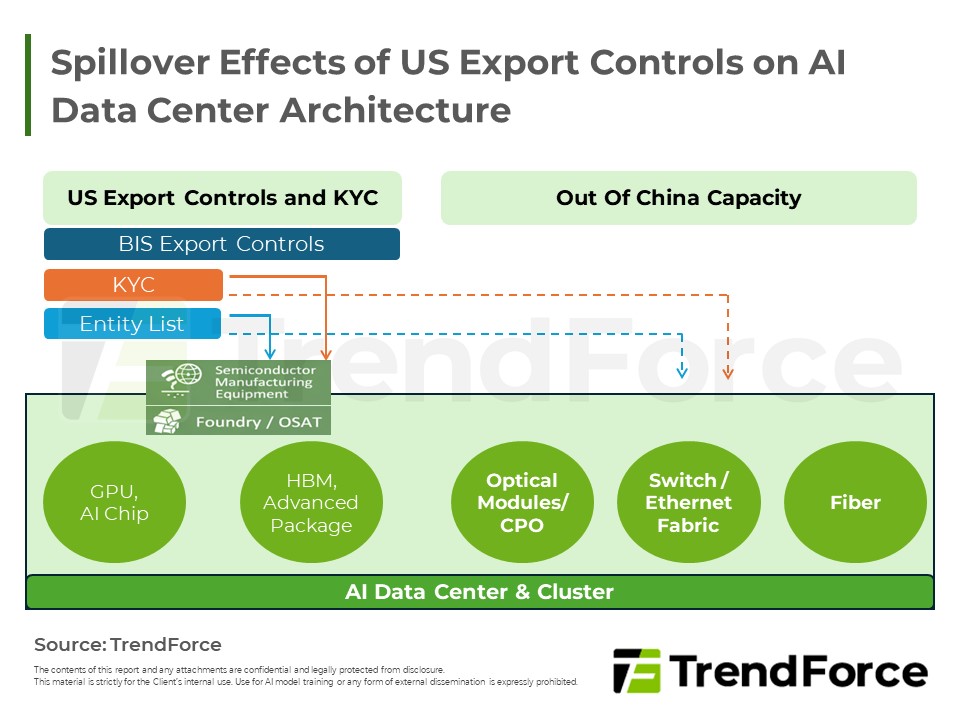

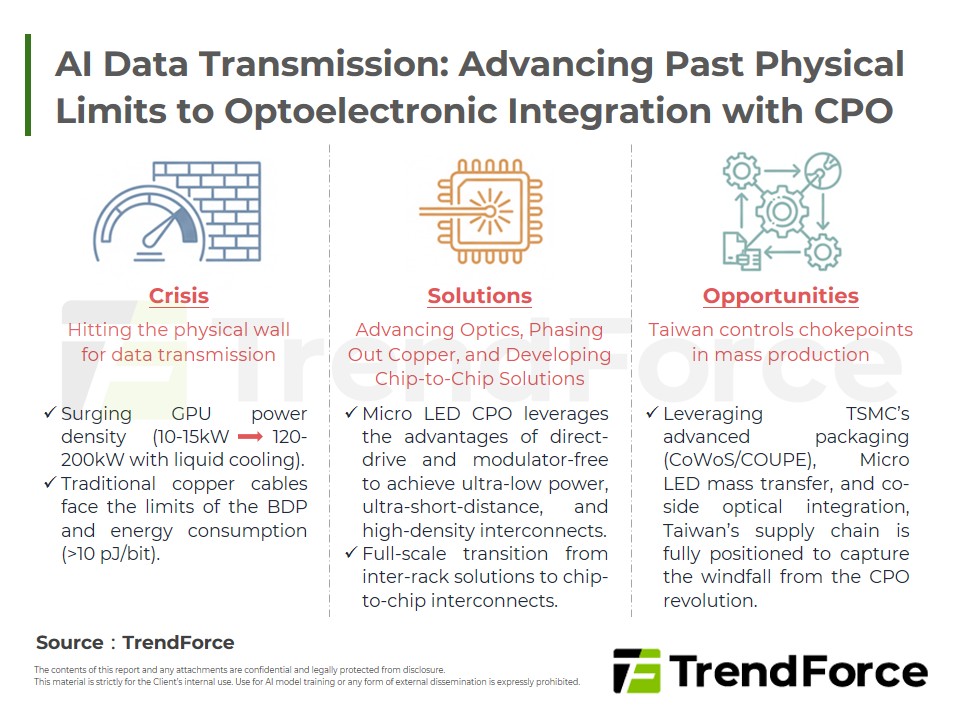

Optical Interconnect Supply Chain Restructuring and Opportunities in Response to Expanded US Export Controls

2026/04/30

Selected Topics

PDF

-

CSP CapEx Fuels 12.8% Server Growth: 2026 Forecast

2025/12/18

Selected Topics

PDF

-

Smartphone Production May Drop Over 15%: 2026 Memory Surge Ignites Cost Storm

2026/01/23

Selected Topics

PDF

-

Server DRAM Price Surge: Long-Term Strategy & Capacity Race - From Q4 2025

2025/11/12

Selected Topics

PDF

