About TrendForce News

TrendForce News operates independently from our research team, curating key semiconductor and tech updates to support timely, informed decisions.

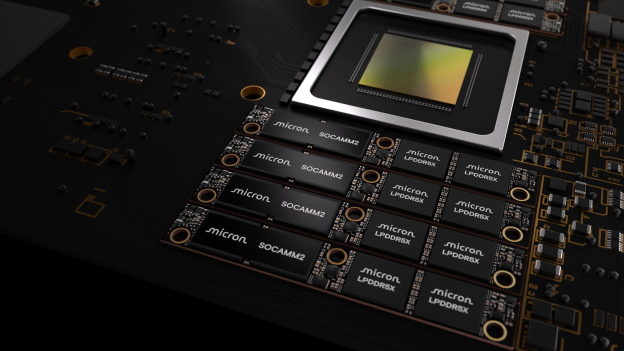

[News] SOCAMM War Heats up: Micron Ships 256GB SOCAMM2 Samples, Topping Industry Capacity

Shortly after confirming that it had begun sampling its 192GB SOCAMM2 last October, Micron has moved a step further. According to a new press release, the company has started shipping customer samples of a 256GB SOCAMM2 — currently the highest-capacity LPDRAM module in the industry.

Micron said the module is built on the industry’s first monolithic 32Gb LPDDR5X die, cutting power consumption to one-third that of standard RDIMMs while shrinking the footprint to one-third the size. Performance also rises sharply: the module delivers 2.3x faster time-to-first-token in long-context LLM inference and three times better performance per watt in standalone CPU deployments.

According to the U.S. memory giant, capacity scales further as well, offering 1.33x more memory per module — enabling up to 2TB of LPDRAM on an 8-channel server CPU platform for AI and HPC workloads.
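The capacity figures above can be checked with simple arithmetic. The sketch below assumes the 1.33x figure compares the new 256GB module with the earlier 192GB SOCAMM2, and that the 8-channel platform carries one module per channel; neither assumption is stated explicitly in the report, and the variable names are illustrative.

```python
# Module capacities quoted in the article, in GB.
PREVIOUS_MODULE_GB = 192
NEW_MODULE_GB = 256

# Assumption (not stated in the report): one SOCAMM2 module per channel.
CHANNELS = 8
MODULES_PER_CHANNEL = 1

# Capacity gain of the new module over the previous one.
capacity_ratio = NEW_MODULE_GB / PREVIOUS_MODULE_GB

# Total platform capacity, converted from GB to TB (1 TB = 1024 GB).
platform_gb = NEW_MODULE_GB * CHANNELS * MODULES_PER_CHANNEL
platform_tb = platform_gb / 1024

print(f"{capacity_ratio:.2f}x capacity per module")
print(f"{platform_tb:.0f} TB per platform")
```

Under these assumptions the ratio works out to about 1.33 and the platform total to 2TB, which matches the figures Micron cites.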

Micron also noted that as AI training, inference, agentic AI, and general-purpose compute converge, LPDRAM serves as a cornerstone solution for both AI and core compute servers in data centers that are increasingly power- and thermally constrained. The company said it is collaborating with NVIDIA on advanced memory for next-gen AI infrastructure, leading work on the JEDEC SOCAMM2 standard, and maintaining close technical partnerships with system designers.

SOCAMM Gains Traction Beyond NVIDIA

Hankyung explains that LPDDR DRAM chips were once installed individually “onboard” next to the CPU to aid processing. SOCAMM changes the game by combining four LPDDR DRAMs into a single, finger-sized substrate tucked beneath the module. The Elec adds that NVIDIA initially developed its SOCAMM standard for AI servers. NVIDIA's Grace CPUs with LPDDR5X target low-power AI servers and are deployed on the GB300 platform alongside a Blackwell GPU.

As The Elec notes, however, SOCAMM2 is the next-gen standard and is now moving through formal JEDEC ratification. Once it is approved, adoption will extend beyond NVIDIA, the report says, adding that industry observers expect LPDDR-based modules to gain real traction in AI servers after 2026. For instance, Microsoft has formally confirmed plans to deploy LPDDR5X in data center architectures, according to the report.

Memory Giants Race to Roll Out SOCAMM2

Against this backdrop, major memory vendors — Samsung Electronics, SK hynix, and Micron — have already initiated SOCAMM2 development and unveiled early prototypes, The Elec reports. Samsung, according to The Elec, confirmed that it has developed SOCAMM2, an ultra-compact, compressed removable memory module, and has begun shipping samples to NVIDIA.

Notably, Hankyung reports that NVIDIA is poised to place orders totaling 20 billion Gb of SOCAMM modules with DRAM suppliers. Samsung Electronics is expected to secure the largest share at roughly 10 billion Gb. Of the remaining volume, SK hynix is projected to take 60–70%, with the balance going to Micron, the report says.
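The reported split can be worked through as a rough calculation. The sketch below treats Samsung's "roughly 10 billion Gb" as exactly 10 billion for illustration and reads "Gb" as gigabits (8 bits per byte); the variable names are hypothetical, and the resulting ranges are derived from the report's percentages rather than stated in it.

```python
# Figures from the Hankyung report, in gigabits (Gb).
TOTAL_GBIT = 20_000_000_000
SAMSUNG_GBIT = 10_000_000_000  # "roughly 10 billion", taken as exact here

# Volume left for SK hynix and Micron.
remaining_gbit = TOTAL_GBIT - SAMSUNG_GBIT

# SK hynix is projected to take 60-70% of the remainder.
sk_hynix_range = (remaining_gbit * 60 // 100, remaining_gbit * 70 // 100)

# Micron gets the balance: smallest when SK hynix takes 70%, largest at 60%.
micron_range = (remaining_gbit - sk_hynix_range[1],
                remaining_gbit - sk_hynix_range[0])

# Convert the total order from gigabits to gigabytes.
total_gbyte = TOTAL_GBIT // 8

print(f"SK hynix: {sk_hynix_range[0] / 1e9:.0f}-{sk_hynix_range[1] / 1e9:.0f} billion Gb")
print(f"Micron:   {micron_range[0] / 1e9:.0f}-{micron_range[1] / 1e9:.0f} billion Gb")
print(f"Total:    {total_gbyte / 1e9:.1f} billion GB")
```

On these assumptions SK hynix would supply about 6 to 7 billion Gb and Micron about 3 to 4 billion Gb, with the full order equal to roughly 2.5 billion GB.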

(Photo credit: Micron)