AI server

News

[News] TSMC Faces Capacity Shortage, Samsung May Provide Advanced Packaging and HBM Services to AMD

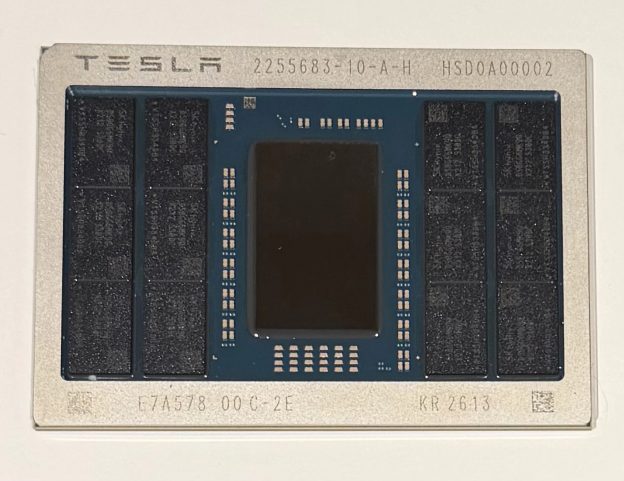

According to the Korea Economic Daily, Samsung Electronics' HBM3 and packaging services have passed AMD's quality tests. The upcoming Instinct MI300 series AI chips from AMD are planned to incorporate Samsung's HBM3 and packaging services. These chips, which combine central processing units (CPUs), ...

Press Releases

Server Supply Chain Becomes Fragmented, ODM’s Southeast Asia SMT Capacity Expected to Account for 23% in 2023, Says TrendForce

US-based CSPs have been establishing SMT production lines in Southeast Asia since late 2022 to mitigate geopolitical risks and supply chain disruptions. TrendForce reports that Taiwan-based server ODMs, including Quanta, Foxconn, Wistron (including Wiwynn), and Inventec, have set up production bas...

News

[News] Dell’s Large Orders Boost Wistron and Lite-On, AI Server Business to Grow Quarterly

Dell, a major server brand, placed a substantial order for AI servers just before NVIDIA's Q2 financial report. This move is reshaping Taiwan's supply chain dynamics, favoring companies like Wistron and Lite-On. Dell is aggressively entering the AI server market, ordering NVIDIA's top-tier H100 c...

News

[News] Taiwan Server Supply Chain's Wistron, GIGABYTE Will Benefit from UK’s AI Chip Purchase

Following Saudi Arabia's $13 billion investment, the UK government is dedicating £100 million (about $130 million) to acquire thousands of NVIDIA AI chips, aiming to establish a strong global AI foothold. Potential beneficiaries include Wistron, GIGABYTE, Asia Vital Components, and Supermicro. P...

In-Depth Analyses

New TrendForce Report Unveils: Rising AIGC Application Craze Set to Fuel Prolonged Demand for AI Servers

In just six months, AI has evolved from generating text, images, music, and code to automating tasks and producing agents, showcasing astonishing capabilities. TrendForce has issued a new report titled "Surge in AIGC Applications to Drive Long-Term Demand for AI Servers." Beyond high...
