[News] Micron Says AI Still in Early Stage as AI Memory Demand Reportedly Seen Exceeding 50% of Total Market This Year

Micron Technology CEO Sanjay Mehrotra said the memory supply crunch is only beginning as AI demand continues to grow. According to Wccftech, citing an interview with CNBC, he noted that AI remains in its early stages and that ongoing advances will require not only faster memory but also more of it. He added that as inference scales, token demand will rise, driving the need for both higher-capacity and higher-performance memory to fully realize AI’s capabilities.

Notably, he emphasized that the issue extends beyond pricing, as memory supply remains extremely tight and cannot be ramped up easily—a trend already reflected in the company’s results.

Micron notes that demand across both traditional and AI servers remains strong but is constrained by tight DRAM and NAND supply. Against this backdrop, AI demand for DRAM and NAND could surpass 50% of the industry’s total addressable market (TAM) this year.

In line with this trend, the Financial Times adds, citing analysts, that the supply crunch is unlikely to ease before 2028, given the time required to build new fabrication plants.

According to South Korean outlet Global Economic, as shipments of 12-layer HBM4 for next-generation AI GPUs such as Vera Rubin begin to ramp in earnest, more cautious forecasts suggest the industry may meet only around 60% of DRAM demand by 2027. This dynamic could bolster pricing power for memory makers, but it cuts both ways: if mass production fails to scale in time, their market leadership could come under pressure.

Micron Advances Next-Gen Memory Roadmap

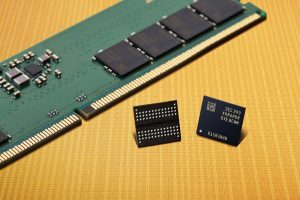

Amid tightening supply, Micron’s latest product roadmap highlights its push in next-generation memory. According to Wccftech, Micron is supplying 36GB (12-Hi) HBM4 DRAM for NVIDIA’s Vera Rubin platform. The company is also advancing its next-generation HBM4E memory, with volume ramp expected next year. On the LPDDR side, Micron recently introduced a 256GB SOCAMM2 solution based on LPDDR5X modules, offering capacities of up to 2TB. It is also providing DDR5 memory for NVIDIA’s Groq 3 LPX, while the Groq LPU is capable of supporting up to 12TB of memory per chip.

Meanwhile, Wccftech reports that on the consumer side, Micron expects PC and smartphone shipments to decline in the low double-digit range, pressured by tight supply and rising prices. The company also notes that 32GB is increasingly becoming the standard configuration for PCs running agentic AI workloads locally.

(Photo credit: Micron)