2026 NVIDIA AI Outlook: From GPU to LPU Racks & Inference

Last Modified: 2026-03-17

Update Frequency: Aperiodically

Format

NVIDIA expands its AI factory via integrated GPU, CPU, and LPU racks for training and inference, securing its market lead.

Key Highlights

- Hardware Racks: Shifts to integrated GPU, CPU, and LPU racks that cover both AI training and low-latency agentic inference.

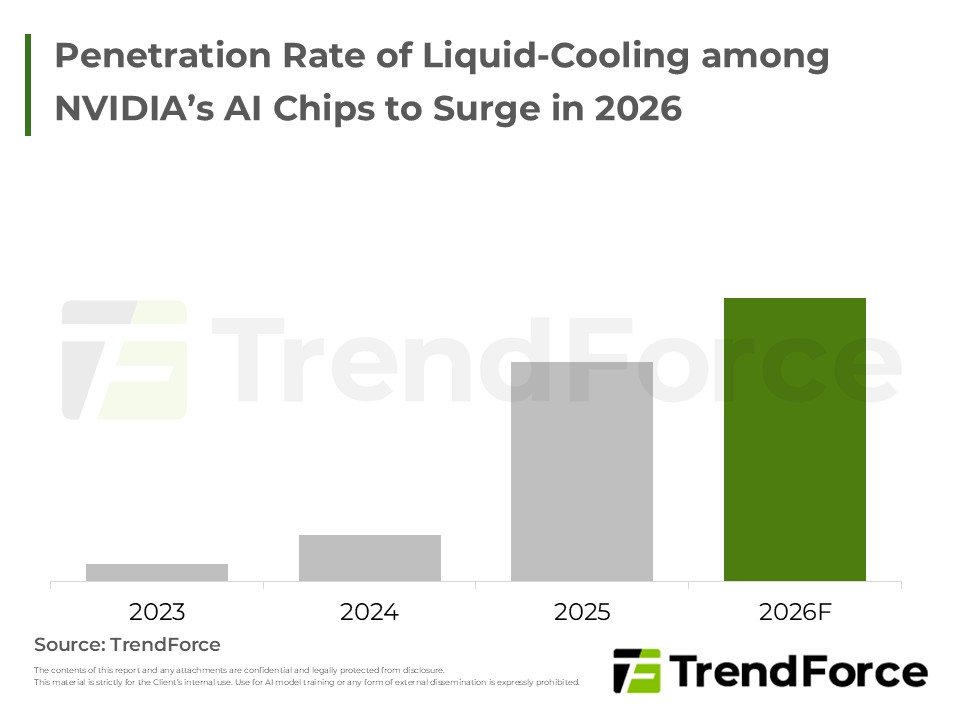

- Architecture: Pioneers disaggregated inference, liquid cooling, and copper and co-packaged optics (CPO) interconnects to push past bandwidth limits.

- Defense: Counters cloud providers' custom chips through deep software-hardware lock-in, reinforcing its AI lead.

Table of Contents

- Introduction

- NVIDIA's Product Roadmaps to Segment into GPUs, CPUs, and LPUs when Targeting the AI Market

- Vera Rubin Rack Expected to Gradually Replace GB300 after Release in 2H26

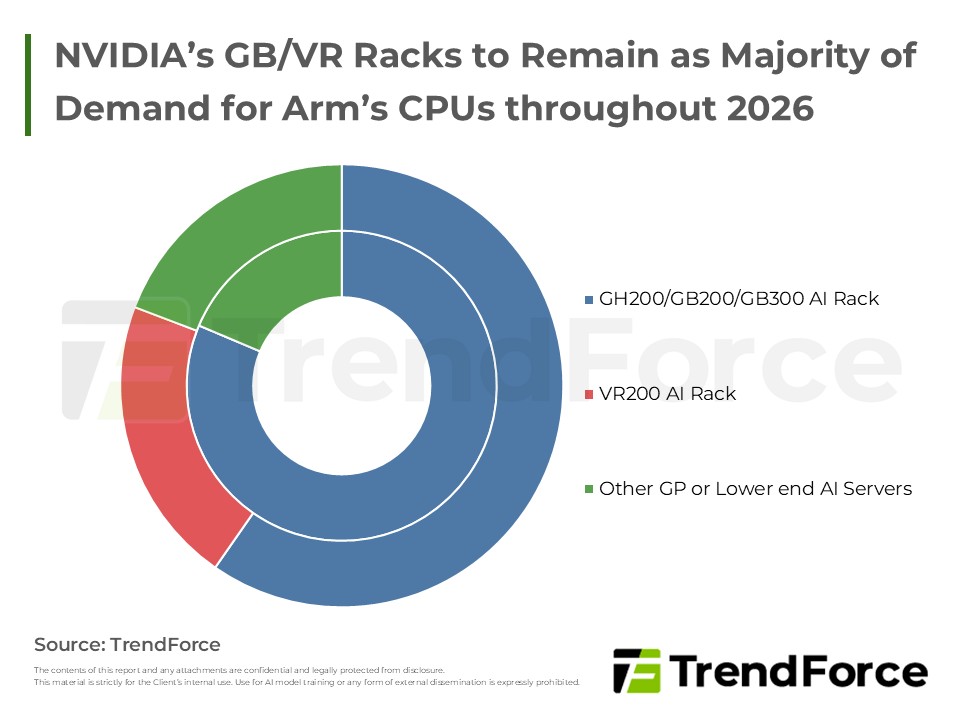

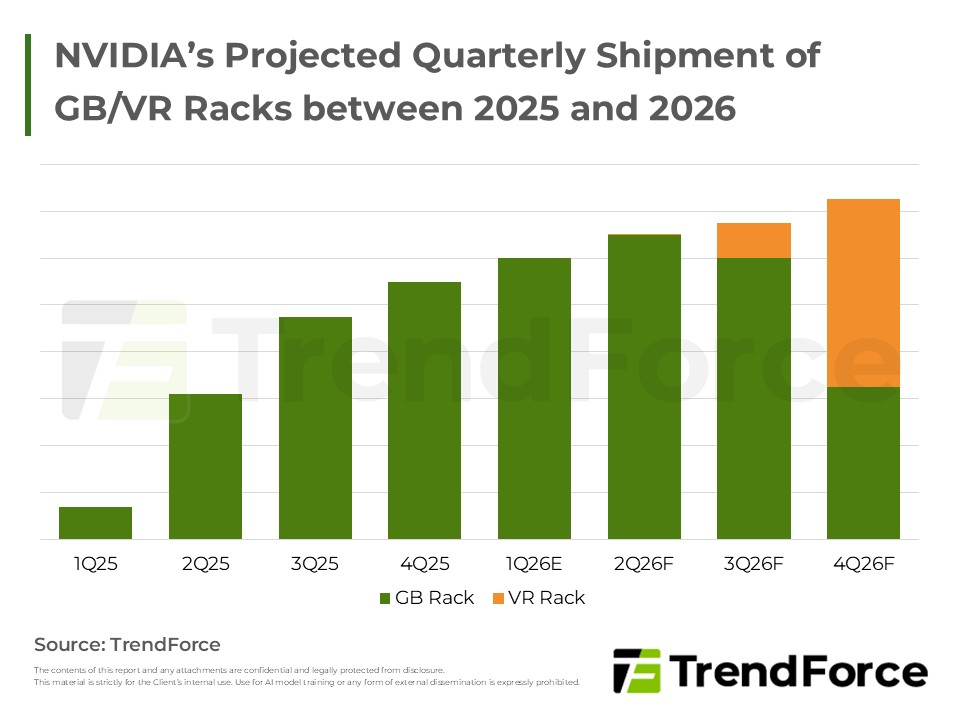

- NVIDIA's Projected Quarterly Shipment of GB/VR Racks between 2025 and 2026

- NVIDIA Also Proposed Vera CPU to Strengthen Agentic AI Infrastructures

- NVIDIA Introduces the LPU as a Core Component in Its AI Disaggregated Inference Architecture

- NVIDIA Introduces Disaggregated Inference LPX Rack Design That Is Distinct from VR Rack

- NVIDIA's Diverse Next-Generation Chips Will Underpin Future Memory Growth

- NVIDIA Leverages CPO to Enhance High-Speed Interconnect Performance of Rack-Scale AI Chip Solutions and Whole Rack Systems

- Overview of NVIDIA's Chip Generations and Rack Interconnect Architectures

- Conclusion: Facing the Challenge of Rising Market Share for CSPs' In-House ASICs, NVIDIA Pushes Integrated Rack Solutions Across CPUs, GPUs, and LPUs to Accelerate Its Expansion from AI Training into Inference

<Total Pages: 10>

Category: AI/HBM/Server

Spotlight Report

- AI Reshapes Memory: Market Revenue to Peak by 2027 (2026/01/20, Semiconductors, PDF)

- Micron to Acquire PSMC’s Tongluo P5 Fab to Contribute to DRAM Supply for 2027 (2026/01/18, Semiconductors, PDF)

- DRAM Monthly Datasheet Apr. 2026 (2026/04/16, Semiconductors, EXCEL)

- HBM Industry Analysis - 1Q26 (2026/02/04, Semiconductors, PDF)

- NAND Flash Monthly Datasheet Apr. 2026 (2026/04/15, Semiconductors, PDF)

- 2Q26 Memory Price Forecast (2026/03/26, Semiconductors, PDF)

