AI Interconnect Outlook: NVIDIA Leads the Transition to CPO and Silicon Photonics Architectures

Last Modified: 2026-04-16

Update Frequency: Aperiodically

NVIDIA is shifting AI competition from raw computing performance to system interconnects, using co-packaged optics (CPO) and silicon photonics to break I/O bottlenecks. By integrating high-speed fabric with advanced packaging, the company is redefining data center efficiency and accelerating the optical supply chain's transition.

Key Highlights

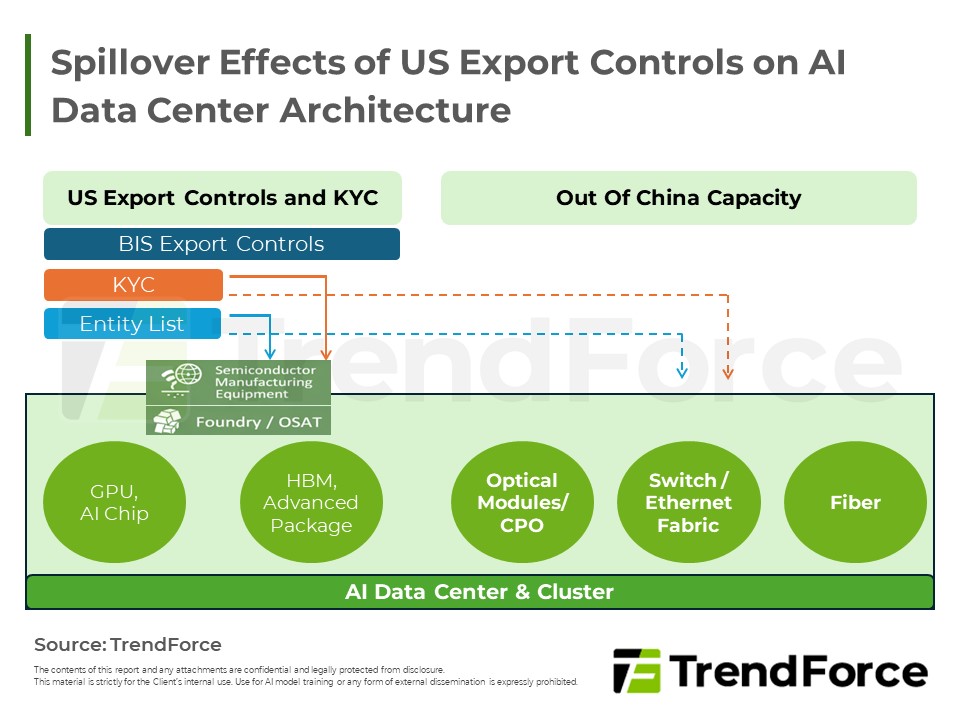

- I/O Bottleneck: AI performance now hinges on interconnect capacity rather than raw compute, as traditional copper wiring hits physical limits in power, reach, and signal integrity.

- CPO Necessity: Co-Packaged Optics shortens electrical paths to reduce SerDes power and latency, offering a practical path to sustainable high-bandwidth scaling beyond 1.6T.

- System-Level Fabric: By integrating silicon photonics and advanced switches, NVIDIA treats entire racks as a single, massive GPU, optimizing data flow from chip to backbone.

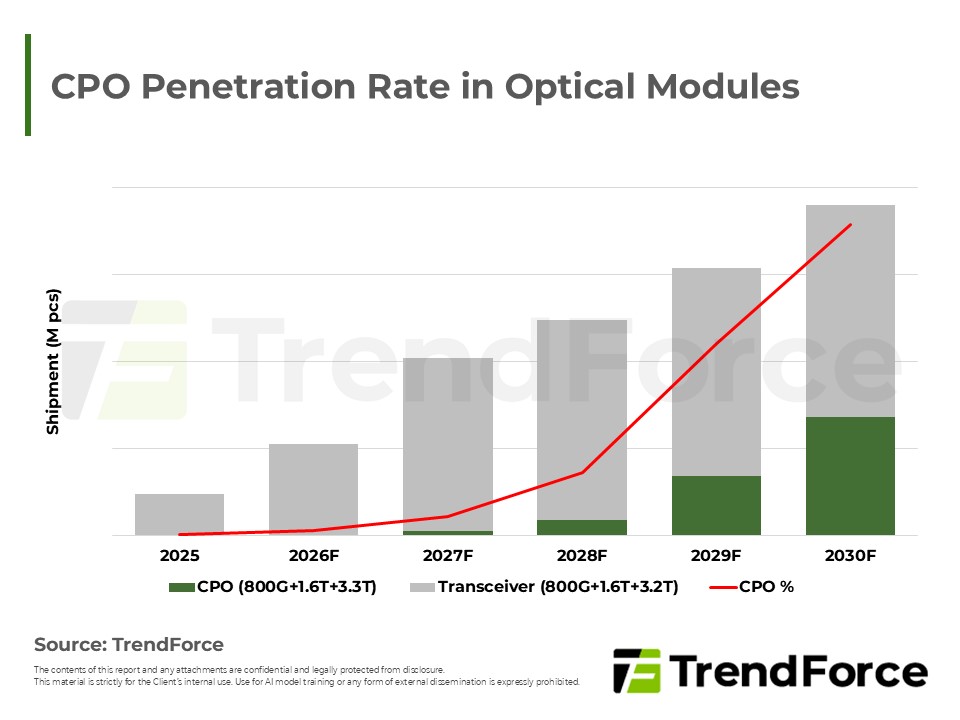

- Market Trajectory: Adoption will accelerate with future generations, shifting the supply chain focus toward silicon photonics and high-density fiber arrays as CPO penetration rises.

Table of Contents

- TrendForce’s View

- CPO Penetration Rate in Optical Modules

- NVIDIA Fabric Architecture

- NVIDIA Scale-Out Fabric

- Differences between Pluggable Optical Modules and CPO

- Overview of NVIDIA’s GPU Generations and Rack Interconnect Architectures

- DWDM Photonics For Interconnects

- Example – 200Gbps vs. 32Gbps circuitry

- Optics on Interposer w/ DWDM

- Optics on Interposer

- Spectrum-X Photonics delivers 64x better signal integrity

- Overview of NVIDIA’s Three-Layer Interconnect Architecture

Total Pages: 13

Category: AI/HBM/Server
