[News] Meta’s In-House AI Chip Push: Four New Accelerators Planned Through 2027 Amid Memory Crunch

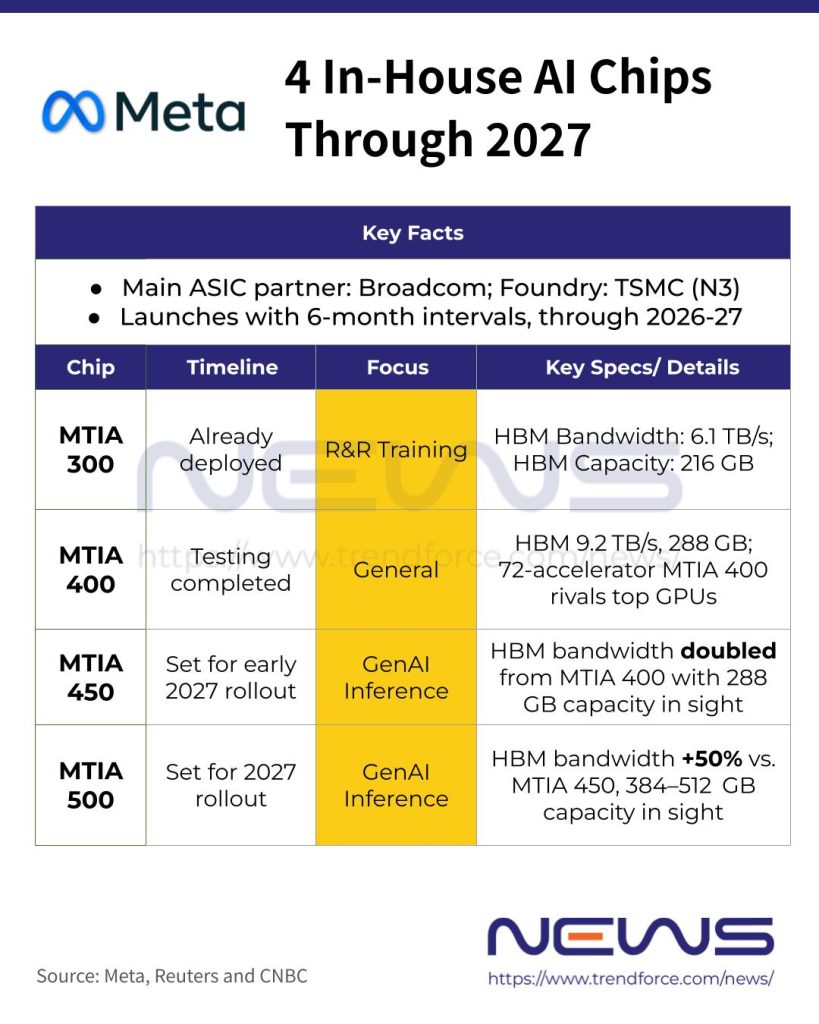

Shortly after securing major chip supply deals with NVIDIA and AMD in February, Meta Platforms unveiled a roadmap for four internally developed chips through 2027. The company plans to introduce them at roughly six-month intervals, with each designed for an expected lifespan of more than five years, according to Reuters and CNBC.

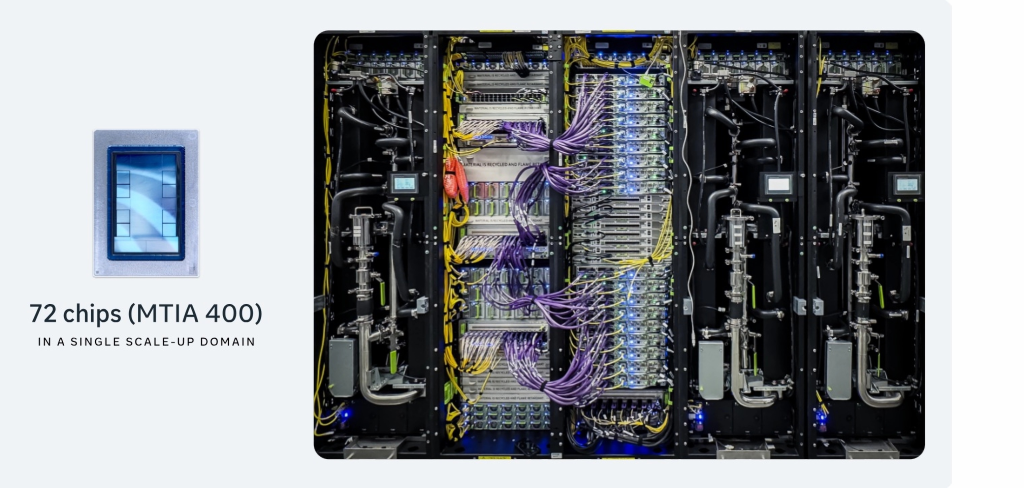

The social media giant, as per Reuters, has already deployed MTIA 300, the first of its four in-house AI chips, to run its ranking and recommendation systems. Next in line, MTIA 400 has completed testing and is now “on the path to deployment” across the company’s data centers, CNBC reports.

According to CNBC, the upcoming lineup—MTIA 400, MTIA 450, and MTIA 500—is aimed at more advanced generative AI inference workloads, including generating images and videos from users’ text prompts. However, these chips are not intended for training massive large language models.

As highlighted by Reuters, Meta has seen some traction with its inference-focused chips, but its long-standing push to develop a generative AI training processor—capable of building the large-scale models that underpin modern AI applications—has proven more challenging.

Notably, Yahoo Finance, citing Meta, said MTIA 400 is the company’s first in-house accelerator to combine cost efficiency with performance on par with leading commercial products, which in this space come largely from NVIDIA and AMD.

Amid Meta’s in-house silicon push, its long-time design and manufacturing partners, Broadcom and TSMC, are expected to be the major beneficiaries, as noted by Reuters and CNBC.

(Photo credit: Meta)

Memory Constraints Loom

Meta’s upcoming MTIA chips will carry increasing amounts of high-bandwidth memory (HBM) to power generative AI inference workloads. CNBC highlights that this ambitious chip roadmap comes amid a wider memory shortage in the tech industry, raising potential supply chain challenges for future deployments.

Meta’s Vice President of Engineering, Yee Jiun Song, told CNBC the company is “absolutely worried about HBM supply,” but added that it believes enough has been secured to support its planned chip deployments.

Song wouldn’t comment on whether the company has signed longer-term contracts with memory vendors to protect against the shortage, but said that Meta has a “diversified” approach to its supply chain and silicon strategy, according to CNBC.

Meta, according to its press release, targets 288 GB of HBM capacity for both MTIA 400 and MTIA 450, compared to 216 GB on the MTIA 300. The MTIA 500 is set to go further, reaching 384–512 GB.
