[News] Huawei Ascend, Cambricon and Hygon Completed Day 0 Adaptation to DeepSeek-V4

Recently, DeepSeek released a preview version of DeepSeek-V4 and simultaneously open-sourced its model weights. This marks a significant technological upgrade: two model variants, million-token context length, and ultra-low inference cost, which all draw strong industry attention. Notably, Chinese AI chip vendors including Huawei Ascend, Cambricon, Hygon Information, and Moore Threads completed Day 0 adaptation on the day of release.

Industry observers note that such “model-ready-on-launch” capability was previously exclusive to NVIDIA. The synchronized rollout by Chinese AI chips signals a transition from “lagging adaptation” to “simultaneous deployment,” highlighting closer synergy between large models and Chinese domestic compute infrastructure.

DeepSeek-V4: Million-Token Context and Dual-Model Strategy

The DeepSeek-V4 series comprises two models. DeepSeek-V4-Pro features 1.6 trillion parameters, with 49 billion activated per inference, targeting top-tier proprietary models and designed for complex reasoning, agent-based applications, and long-context processing. DeepSeek-V4-Flash, with 284 billion parameters and 13 billion activated, emphasizes cost efficiency and is optimized for high-concurrency, lightweight scenarios.

Technically, DeepSeek-V4 natively supports a 1 million-token context window. Leveraging hybrid attention mechanisms (CSA + HCA) and sparse attention (DSA), it significantly reduces computational and memory overhead, thereby lowering inference costs. The model has already been adapted to Ascend 950 chips; with mass deployment of Ascend 950 supernodes expected in the second half of the year, pricing for V4-Pro is likely to decline further while service throughput improves.

DeepSeek-V4 demonstrates strong performance in coding and mathematical benchmarks. It outperforms many proprietary models in platforms like LiveCodeBench and Codeforces, with mathematical reasoning approaching leading levels. The model also shows solid performance in agent-based tasks, particularly for simpler scenarios.

DeepSeek-V4 supports API formats compatible with OpenAI and Anthropic, while offering significantly lower pricing than proprietary alternatives. With open-sourced weights and support for local deployment, it also addresses data security requirements. Going forward, DeepSeek plans to further enhance agent capabilities and narrow the performance gap with proprietary models.

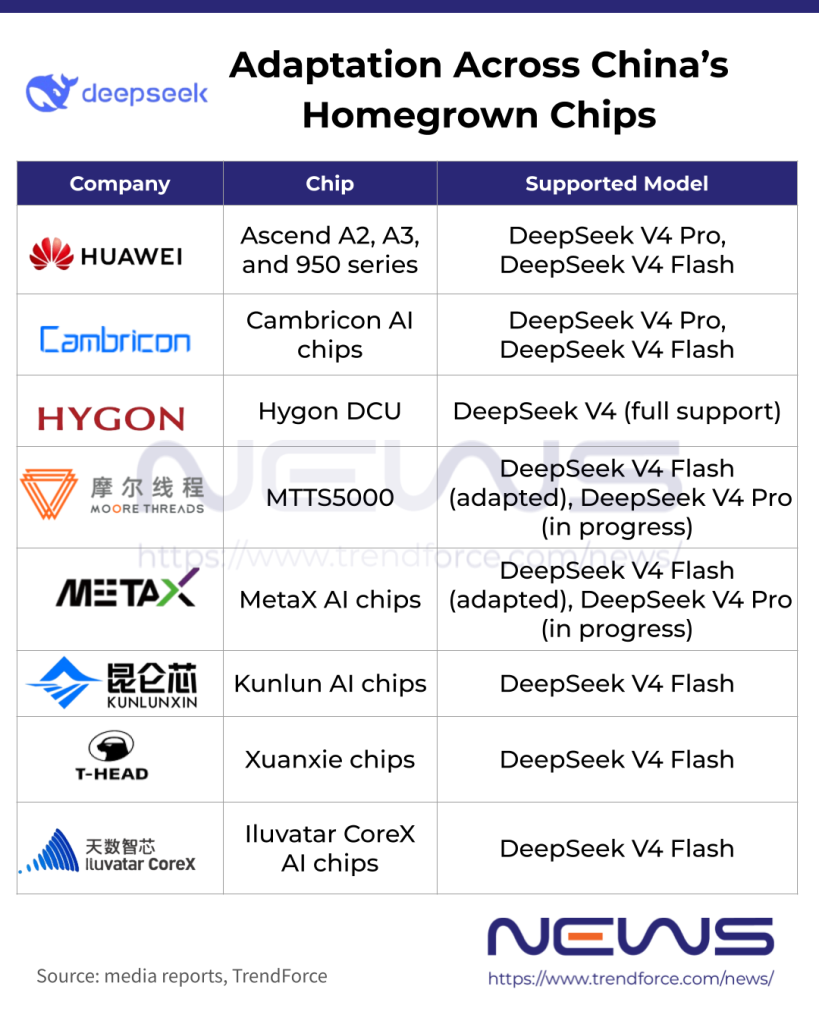

Broad Day 0 Adaptation Across Domestic AI Chips

On the day of release, multiple domestic chipmakers achieved Day 0 adaptation. This means full-stack compatibility, performance optimization, and stability validation were completed upon launch, enabling immediate deployment without delay. Historically, only NVIDIA could achieve this, with other GPUs typically lagging by months. DeepSeek-V4’s launch marks the first time domestic chips have collectively reached this milestone.

Huawei Ascend: Full product lineup including Ascend A2, A3, and 950 supports both V4-Pro and V4-Flash. Ascend 950 leverages fused kernels and multi-stream parallelism to reduce attention computation and memory access overhead, significantly improving inference efficiency. Combined with quantization techniques, it enables high-throughput, low-latency deployment. Ascend supernodes also provide training reference implementations for rapid fine-tuning.

Cambricon: Completed Day 0 adaptation based on the vLLM inference framework, with adaptation code open-sourced on GitHub, supporting both V4 variants.

Hygon Information: Its DCU (Deep Computing Unit) platform achieved Day 0 adaptation and conducted in-depth model optimization, forming a closed loop from “model release to chip adaptation to industrial deployment,” with ready-to-use solutions.

Moore Threads: On April 24, in collaboration with the Beijing Academy of Artificial Intelligence, Moore Threads completed Day-0 inference adaptation for both models based on its flagship MTT S5000 AI training-inference card and FlagOS full-stack software. Pro and Flash images were released on the ModelScope community, providing turnkey domestic deployment solutions.

Additional vendors completing adaptation include Metax Technology (full adaptation and deployment for V4-Flash), Baidu Kunlunxin, T-Head Semiconductor (under Alibaba Group), and Iluvatar CoreX, all completing Day 0 enablement of V4-Flash. These efforts collectively cover the mainstream Chinese AI chip ecosystem.

(Photo credit: DeepSeek)