[News] NVIDIA Jetson Thor and Robotics: The Real Challenge of Multimodal AI

Multimodal Perception and Reasoning Bring Physical AI Closer to Reality

Over the past few years, large language models (LLMs) have demonstrated AI’s strong logical capabilities in the digital world. Today, however, the core challenge of artificial intelligence is no longer merely about scaling model parameters, but about enabling AI to evolve from an on-screen consultant into a real-world workforce. This transition has become the defining value proposition behind the rise of Physical AI.

Physical AI emphasizes real-time computing capabilities in the physical world, controllable motion, and long-term operational stability. By deeply interacting with the environment through diverse sensing systems and learning from these interactions, Physical AI gradually builds an understanding of the real world—forming a closed-loop architecture that can “see the scene, understand instructions, and execute actions.” This leap from virtual bits to physical atoms relies heavily on advances in multimodal model technologies and has become a core battleground for industry competition heading into 2026.

The cross-modal reasoning capabilities of multimodal models enable deep integration across visual foundation models, Vision-Language-Action (VLA) models, and LLM-based decision layers. This allows Physical AI systems to understand continuous events and causal relationships during operation—such as identifying objects, interpreting semantic meaning, inferring action paths, and adjusting movements in real time.

Take a household robotics scenario as an example: the command “carry the soup to the living room” is not simply about moving an object. It implicitly requires preventing spills, avoiding obstacles, and maintaining balance. Tasks like these demand coordinated operation among multiple models, forming a context-aware decision chain. As application scenarios and deployment environments grow increasingly complex, the demand for real-time computing power to run multimodal models continues to rise.

High Computing Power Is No Longer Just for Show: The Emergence of NVIDIA Jetson Thor

In the Physical AI domain, computing power is no longer about performance showmanship or brute-force demonstrations. Instead, it represents the fundamental reflexes required for intelligent agents—such as robots—to survive and operate in real-world environments. A millisecond-level delay may simply feel like slower loading in the digital world, but in the physical world, it can result in collisions or safety incidents.
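To make the stakes concrete, the short calculation below shows how far a moving robot travels before a delayed reaction takes effect. It is only an illustrative sketch: the speed of 1.5 m/s and the latency values are assumed figures chosen for the example, not measurements from any NVIDIA platform.

```python
def distance_during_delay(speed_m_per_s: float, delay_ms: float) -> float:
    """Return the distance in meters covered while a reaction is delayed."""
    return speed_m_per_s * (delay_ms / 1000.0)


# Assumed figures for illustration: a mobile robot moving at 1.5 m/s.
ASSUMED_SPEED_M_PER_S = 1.5

for delay_ms in (10, 50, 200):
    meters = distance_during_delay(ASSUMED_SPEED_M_PER_S, delay_ms)
    print(f"{delay_ms:>3} ms of delay -> {meters * 100:.1f} cm travelled")
```

Under these assumptions, the gap between an on-device reaction time and a slower round trip is the difference between a minor correction and a sizeable unplanned movement.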

As the operational core, multimodal models face the challenge of processing massive volumes of data from multiple sensors in real time while ensuring deterministic outcomes. Moreover, much of this workload must be handled at the edge, as cloud-based processing cannot meet stringent requirements for safety and response latency. This creates a need for hardware that simultaneously delivers AI performance, low power consumption, and high reliability.

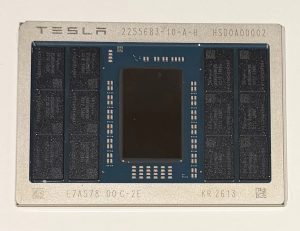

Against this backdrop, NVIDIA has introduced the NVIDIA Jetson AGX Thor, an integrated computing platform designed specifically for Physical AI applications such as robotics and autonomous systems. This high-end platform integrates sensing, inference, and control within a unified architecture, and its substantial computing capability lets it handle high-level semantic reasoning and low-level motion control at the same time.

This architecture allows Physical AI systems to run large-model inference and high-frequency control loops while meeting functional safety and real-time requirements. NVIDIA Jetson Thor also highlights NVIDIA’s advantage in the Physical AI era—namely, its tight hardware–software co-design spanning CUDA, ecosystem support, and robotics development frameworks. From an industry perspective, the significance of NVIDIA Jetson Thor lies in lowering the deployment barriers for multimodal systems and accelerating the transition of Physical AI from demonstrations to mass production.
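The idea of running slow large-model inference next to a fast control loop can be sketched as a simple dual-rate schedule. The example below is a pure simulation under stated assumptions: the 1000 Hz control rate, the 10 Hz planner rate, the proportional gain, and the `slow_planner` stand-in are all hypothetical and do not represent any NVIDIA API or the actual Jetson Thor software stack.

```python
def slow_planner(call_index: int) -> float:
    """Hypothetical stand-in for large-model inference: returns a new target."""
    return float(call_index + 1)


def run_dual_rate(ticks: int, control_hz: int = 1000, planner_hz: int = 10,
                  gain: float = 0.05) -> tuple[float, int]:
    """Simulate a fast control loop that tracks targets from a slower planner."""
    ticks_per_plan = control_hz // planner_hz  # control ticks between planner updates
    target = 0.0
    position = 0.0
    planner_calls = 0
    for tick in range(ticks):
        # Slow path: refresh the target only once every `ticks_per_plan` ticks.
        if tick % ticks_per_plan == 0:
            target = slow_planner(planner_calls)
            planner_calls += 1
        # Fast path: every tick, nudge the position toward the latest target.
        position += gain * (target - position)
    return position, planner_calls


position, planner_calls = run_dual_rate(ticks=1000)
print(f"planner ran {planner_calls} times, final position {position:.3f}")
```

The design point is that the control loop never waits for the planner: it always acts on the most recent target, so a slow inference step delays the next goal update rather than the motion itself.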

Autonomy as the Next Frontier: Edge Becomes the Main Battleground

With humanoid robots expected to enter household applications as early as 2026, concerns around physical safety, data privacy, and transmission latency are becoming increasingly critical. As a result, the trend toward edge deployment of Physical AI is becoming more pronounced.

Current Edge AI applications have already evolved from single-modality visual recognition to real-time interactive scenarios that integrate speech, vision, and other data sources. Across public services, smart retail, and industrial collaboration, industrial PCs (IPCs) and embedded platforms are increasingly becoming the primary platforms for running multimodal models. Vendors no longer focus solely on showcasing raw computing power; they now focus on delivering real-world applications and integrated hardware–software solutions that span sensing modules, model deployment, and on-site operations and maintenance.

This shift is also driving edge devices to incorporate Agentic AI architectures, enabling systems to complete perception, decision-making, and action locally. The combination of NVIDIA Jetson Thor and multimodal models equips robots with sharp senses and precise motion control. However, even if robots can see their environment and understand commands, their responses may still lack true autonomous decision-making capabilities.

The next phase of Physical AI development therefore centers on intelligent agents that can understand goals, decompose tasks, and dynamically adjust strategies. In this architecture, sensing systems capture physical data, multimodal models handle semantic and physical understanding, and agents are responsible for making decisions—forming intelligent edge devices capable of autonomous action.
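The three layers described here (sensing, multimodal understanding, and agent decisions) can be pictured as a small pipeline. The sketch below is a toy illustration only: the `Observation` and `Understanding` types, the rule-based `understand` function standing in for a multimodal model, and the `decide` function standing in for an agent are all hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """What the sensing layer captures (hypothetical, simplified fields)."""
    obstacle_ahead: bool
    holding_liquid: bool


@dataclass
class Understanding:
    """What a multimodal model would infer from the observation."""
    path_clear: bool
    spill_risk: bool


def understand(obs: Observation) -> Understanding:
    # Stand-in for a multimodal model; a real one would process images and audio.
    return Understanding(path_clear=not obs.obstacle_ahead,
                         spill_risk=obs.holding_liquid)


def decide(goal: str, scene: Understanding) -> list[str]:
    # Agent layer: break the goal into steps and adapt them to the scene.
    steps = []
    if not scene.path_clear:
        steps.append("replan route around obstacle")
    if scene.spill_risk:
        steps.append("move slowly and keep level")
    else:
        steps.append("move at normal speed")
    steps.append(f"complete goal: {goal}")
    return steps


obs = Observation(obstacle_ahead=True, holding_liquid=True)
for step in decide("carry the soup to the living room", understand(obs)):
    print(step)
```

Even in this toy form, the separation is visible: the same goal produces a different plan when the observed scene changes, which is the behavior the agent layer is meant to add on top of perception.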

This marks AI’s transition from a passive executor to an active actor. NVIDIA Jetson Thor lays the foundation for real-time computation, while agents address higher-level behavioral intelligence such as intent understanding and autonomous planning. Seeing, hearing, and moving are merely the starting point of Physical AI; the true inflection point arrives when AI systems can understand objectives and independently decide how to act.

(Photo credit: FREEPIK)