[News] China Inference GPU Firm Sunrise Secures Over RMB 1B in Funding, Valuation Reportedly Exceeds RMB 10B

China’s AI inference GPU sector is seeing fresh momentum. According to ifeng.com, China-based AI inference GPU company Sunrise has completed a new funding round exceeding RMB 1 billion. The round lifts its valuation above RMB 10 billion, making Sunrise the first unicorn in China’s pure-play inference GPU segment.

This marks one of the largest single funding rounds in China’s GPU sector, as AI demand increasingly shifts toward inference in 2026. Sunrise, which was spun off from Chinese AI giant SenseTime, has completed seven funding rounds to date, raising approximately RMB 4 billion in total.

According to the report, proceeds from this round will be used primarily for mass production and delivery of the next-generation Qiwang S3 inference GPU, development of a full-stack software ecosystem, and continued R&D on the follow-on S4 and S5 chips.

Sunrise S3 Targets Inference Efficiency with LPDDR6 Architecture

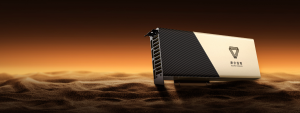

As the report notes, Sunrise officially unveiled its flagship Qiwang S3 inference GPU in January 2026. It is China’s first inference GPU to adopt LPDDR6 memory while remaining compatible with LPDDR5X. Rather than following the HBM-based approach used in high-end training GPUs, the chip is designed around the core requirements of agent-based inference, with a full-stack redesign spanning the AI Core architecture and memory I/O system.

This architectural shift reflects the distinct memory demands of inference workloads. As noted by Leiphone.com, in mainstream cloud scenarios with high concurrency and long context lengths, the KV cache can account for more than 80% of total memory usage. The S3’s LPDDR6-based design is said to provide sufficient bandwidth for inference while increasing memory capacity and cutting power consumption by 50%, in line with inference’s core requirements of large capacity, cost efficiency, and low power consumption.
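To see why the KV cache can dominate memory under high concurrency and long contexts, the standard sizing rule can be sketched as below. This is an illustrative estimate only: the model shape (32 layers, 8 KV heads, head dimension 128, 8 billion parameters, 16-bit storage) is a hypothetical example rather than any S3 or Sunrise figure, and the calculation counts only weights plus KV cache, ignoring activations and runtime overhead.

```python
from dataclasses import dataclass


@dataclass
class ModelShape:
    """Hypothetical decoder-only model shape, for illustration only."""
    num_layers: int
    num_kv_heads: int
    head_dim: int
    num_params: float        # total weight parameter count
    bytes_per_elem: int = 2  # assumes FP16/BF16 for both weights and KV cache


def kv_cache_bytes(m: ModelShape, context_len: int, batch: int) -> int:
    # One K and one V vector per layer, per KV head, per token, per sequence.
    return 2 * m.num_layers * m.num_kv_heads * m.head_dim * context_len * batch * m.bytes_per_elem


def weight_bytes(m: ModelShape) -> float:
    return m.num_params * m.bytes_per_elem


def kv_share(m: ModelShape, context_len: int, batch: int) -> float:
    # Simplified: counts only weights + KV cache, ignoring activations and overhead.
    kv = kv_cache_bytes(m, context_len, batch)
    return kv / (kv + weight_bytes(m))


if __name__ == "__main__":
    model = ModelShape(num_layers=32, num_kv_heads=8, head_dim=128, num_params=8e9)
    for ctx, batch in [(4096, 1), (32768, 16)]:
        gib = kv_cache_bytes(model, ctx, batch) / 2**30
        print(f"context={ctx:>6}, batch={batch:>2}: KV cache {gib:6.1f} GiB, "
              f"{kv_share(model, ctx, batch):.0%} of weights+KV")
```

Under these assumptions, a single 4,096-token request needs about 0.5 GiB of KV cache (roughly 3% of weights plus KV), while 16 concurrent 32,768-token requests need 64 GiB (about 81%). That shift is why capacity per dollar and per watt matters more for inference than peak bandwidth alone.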

At the compute layer, according to ifeng.com, the S3 addresses a key limitation of general-purpose GPUs: their computing resources are often underutilized. Its inference performance is reported to be five times that of the previous-generation S2, with a target of reducing token costs by 90%.

As ifeng.com notes, GEMM (general matrix multiplication) and attention operations account for over 90% of the total compute workload in large language model inference. The S3 is designed to push utilization of these core operators to around 99% for GEMM and 98% for FlashAttention, significantly improving overall efficiency.
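How per-operator utilization figures combine into an overall number can be sketched with a simple time model: each operator class takes time proportional to its share of FLOPs divided by its utilization, so overall utilization is the FLOP-weighted harmonic mean. The report gives only the combined share (over 90%) for GEMM plus attention, so the 70/20/10 FLOP split and the 40% utilization for the remaining operators below are hypothetical values chosen for illustration.

```python
def overall_utilization(mix: list[tuple[float, float]]) -> float:
    """mix: (flop_share, utilization) pairs whose FLOP shares sum to 1.

    Time spent on each operator class is proportional to share / utilization,
    so the overall utilization is the FLOP-weighted harmonic mean.
    """
    total_share = sum(share for share, _ in mix)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("FLOP shares must sum to 1")
    time_in_peak_units = sum(share / util for share, util in mix)
    return 1.0 / time_in_peak_units


if __name__ == "__main__":
    # Hypothetical split: GEMM 70% at 99%, attention 20% at 98%,
    # all other operators 10% at an assumed 40% utilization.
    mix = [(0.70, 0.99), (0.20, 0.98), (0.10, 0.40)]
    print(f"{overall_utilization(mix):.1%}")
```

With these assumed inputs the sketch prints 86.1%, illustrating that high GEMM and attention utilization sets the ceiling, while the remaining operators still affect the end-to-end figure.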

Underscoring the company’s inference focus, Sunrise Chairman Xu Bing, as cited by ifeng.com, said AI inference demand is expected to reach four to five times that of training in 2026, while rental prices for inference computing have risen by nearly 40% over the past six months. He added that the company has progressed through three generations of inference GPUs and reached mass production volumes in the tens of thousands of units.

(Photo credit: Sunrise)