[News] Decoding Google’s TurboQuant: 6x KV Cache Cut—Headwind for Memory Players?

A new Google research breakthrough is sending ripples through the memory sector, with claims that it can sharply reduce memory consumption without any loss in model accuracy. According to Tom’s Hardware, citing a company blog post, Google’s TurboQuant, a training-free compression method, can cut KV cache memory usage by a factor of at least six.

The tech targets one of the key memory bottlenecks: the KV cache. As explained by Tom’s Hardware, KV caches store previously computed attention states so models do not need to recompute them at each token generation step. As context windows grow, so does the cache. Traditional vector quantization is effective at reducing cache size, but it introduces additional overhead because quantization constants must be stored alongside the compressed values, the report notes.
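To put rough numbers on both effects, here is a back-of-the-envelope sketch. The model shape, the 16-bit baseline, the 3-bit payload and the 32-value groups with two 16-bit constants each are all hypothetical values chosen for round numbers, not figures from Google or Tom’s Hardware, so the resulting ratios should not be read as TurboQuant’s reported results.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bits_per_value):
    """Bytes needed to cache keys and values for one sequence."""
    values_per_token = 2 * layers * kv_heads * head_dim  # 2 = one key + one value
    return tokens * values_per_token * bits_per_value / 8


def effective_bits(payload_bits, group_size, constant_bits=16, constants_per_group=2):
    """Bits per value once per-group constants (e.g. scale, zero point) are counted."""
    return payload_bits + constants_per_group * constant_bits / group_size


# Hypothetical model shape and context length, chosen only for round numbers.
shape = dict(layers=32, kv_heads=8, head_dim=128)
tokens = 128 * 1024

baseline = kv_cache_bytes(tokens, bits_per_value=16, **shape)
with_constants = kv_cache_bytes(
    tokens, bits_per_value=effective_bits(3, group_size=32), **shape
)
without_constants = kv_cache_bytes(tokens, bits_per_value=3, **shape)

gib = 1024 ** 3
print(f"16-bit cache:                 {baseline / gib:.1f} GiB")           # 16.0 GiB
print(f"3-bit + per-group constants:  {with_constants / gib:.1f} GiB")     # 4.0 GiB
print(f"3-bit, no stored constants:   {without_constants / gib:.1f} GiB")  # 3.0 GiB
```

Under these assumptions the stored constants add one extra bit per value, which turns a nominal 3-bit format into an effective 4-bit one. The cost per entry is tiny, but it is paid for every cached token.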

Although small per entry, this overhead reportedly becomes significant at larger context scales. According to Google, TurboQuant removes this overhead through a two-step framework.

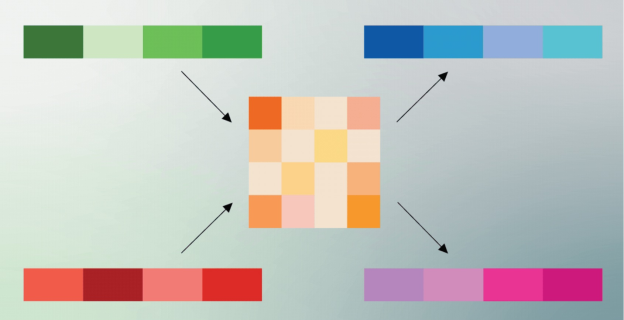

The first step, PolarQuant, randomly rotates the data vectors to simplify their geometric structure, making them easier to compress using a standard high-quality quantizer. Google explains that a quantizer is a method that converts a large range of continuous values (such as precise decimal numbers) into a smaller set of discrete values (such as integers), similar to techniques used in audio compression or JPEG image compression. After rotation, each part of the vector can be quantized separately in a more efficient way.
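The idea of rotating before quantizing can be illustrated with a small sketch. This is not Google’s implementation: a randomized Hadamard transform stands in for the random rotation, a plain uniform quantizer stands in for the "standard high-quality quantizer", and the fixed range `limit` is an assumption. The point is only that a rotation spreads an outlier across all coordinates, so one fixed grid can serve every coordinate without per-block constants.

```python
import math
import random


def fwht(values):
    """Orthonormal fast Walsh-Hadamard transform (length must be a power of two)."""
    out = list(values)
    n = len(out)
    h = 1
    while h < n:
        for start in range(0, n, 2 * h):
            for i in range(start, start + h):
                a, b = out[i], out[i + h]
                out[i], out[i + h] = a + b, a - b
        h *= 2
    return [v / math.sqrt(n) for v in out]


def rotate(vector, signs):
    """Flip random signs, then mix every coordinate with every other one."""
    return fwht([s * v for s, v in zip(signs, vector)])


def unrotate(rotated, signs):
    """Inverse of rotate(): the orthonormal transform is its own inverse."""
    return [s * v for s, v in zip(signs, fwht(rotated))]


def quantize_roundtrip(vector, bits, limit):
    """Snap each coordinate to the centre of one of 2**bits equal bins on [-limit, limit]."""
    levels = 2 ** bits
    step = 2 * limit / levels
    result = []
    for v in vector:
        code = min(max(math.floor((v + limit) / step), 0), levels - 1)
        result.append(-limit + (code + 0.5) * step)
    return result


def distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


rng = random.Random(0)
n, bits = 64, 4
limit = 4 / math.sqrt(n)  # assumed fixed range, roughly 4 standard deviations after rotation

# A unit-length vector dominated by a single outlier coordinate.
raw = [1.0] + [0.01 * rng.gauss(0, 1) for _ in range(n - 1)]
length = math.sqrt(sum(v * v for v in raw))
vector = [v / length for v in raw]
signs = [rng.choice((-1.0, 1.0)) for _ in range(n)]

direct = quantize_roundtrip(vector, bits, limit)
rotated = rotate(vector, signs)
via_rotation = unrotate(quantize_roundtrip(rotated, bits, limit), signs)

error_direct = distance(vector, direct)
error_rotated = distance(vector, via_rotation)
print(f"largest coordinate before rotation: {max(abs(v) for v in vector):.3f}")
print(f"largest coordinate after rotation:  {max(abs(v) for v in rotated):.3f}")
print(f"error, fixed grid without rotation: {error_direct:.3f}")
print(f"error, fixed grid with rotation:    {error_rotated:.3f}")
```

Without rotation the outlier falls outside the fixed range and is clipped. After rotation every coordinate sits well inside the range, so the same 4-bit grid reconstructs the vector with a smaller error.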

While the first stage accounts for most of the compression, the second stage uses a small portion of the remaining capacity to process the residual error left from the first step using the QJL algorithm. This stage functions as a mathematical error-correction layer, removing bias introduced during quantization and improving the accuracy of attention score calculations, Google says.
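A minimal sketch of how a one-bit residual correction can work, in the spirit of a quantized Johnson-Lindenstrauss transform. The coarse rounding used as stage one, the dimensions and all variable names are assumptions for illustration, and the details of Google’s QJL implementation are not given in the reports. The sketch keeps only the sign of each random projection of the residual plus the residual’s norm, then uses them to estimate the missing part of the query-key dot product.

```python
import math
import random

rng = random.Random(0)
dim, num_projections = 16, 2000  # projection count is deliberately large, see note below


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


key = [rng.gauss(0, 1) for _ in range(dim)]
query = [rng.gauss(0, 1) for _ in range(dim)]

# Stage-one stand-in: round each key coordinate to a coarse grid.
step = 0.5
key_coarse = [round(v / step) * step for v in key]
residual = [k - c for k, c in zip(key, key_coarse)]
residual_norm = math.sqrt(dot(residual, residual))

# Stage two: store one sign bit per random Gaussian projection of the residual.
projections = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(num_projections)]
sign_bits = [1.0 if dot(row, residual) >= 0 else -1.0 for row in projections]

# At query time the query is projected at full precision. Because
# E[(s.q) * sign(s.r)] = sqrt(2/pi) * (q.r) / |r| for a Gaussian row s,
# rescaling by sqrt(pi/2) * |r| gives an unbiased estimate of q.r.
correction = (
    math.sqrt(math.pi / 2) * residual_norm / num_projections
    * sum(dot(row, query) * bit for row, bit in zip(projections, sign_bits))
)

exact = dot(query, key)
coarse_only = dot(query, key_coarse)
corrected = coarse_only + correction

print(f"exact attention logit:      {exact:.4f}")
print(f"stage one only:             {coarse_only:.4f}")
print(f"stage one plus correction:  {corrected:.4f}")
```

The projection count here is far larger than a memory-saving scheme would store; it is set high only so that the estimate visibly converges on the exact value. With fewer projections the estimate becomes noisier but remains unbiased.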

The reported performance is striking: according to Tom’s Hardware, in benchmarks on NVIDIA H100 GPUs, 4-bit TurboQuant achieved up to an 8x speedup in attention-logit computation compared with unquantized 32-bit keys, while cutting KV cache memory usage at least sixfold.

According to Google, TurboQuant can quantize the key-value cache to just 3 bits without requiring training or fine-tuning and without compromising model accuracy, all while achieving a faster runtime than the original LLMs (Gemma and Mistral). The research results, released on Tuesday, are scheduled to be presented at ICLR (International Conference on Learning Representations) 2026 next month, Tom’s Hardware adds.

Bad News for Memory Players?

While some may worry about TurboQuant’s impact on overall memory demand, analysts remain broadly positive on the technology. Morgan Stanley notes that it does not affect model weights (HBM usage on GPUs/TPUs) or training workloads. Instead, it allows systems to handle 4–8x longer context windows or significantly larger batch sizes on the same hardware without running out of memory. In other words, it is less about reducing total memory needs and more about improving efficiency.

Meanwhile, Investing.com, citing Lynx Equity Strategies, notes that AI providers need to innovate to address bottlenecks as token context lengths increase in inference, but that this does not reduce demand for memory and flash over the next three to five years, given supply constraints.

(Photo credit: Google)